In the past year, artificial intelligence has advanced at a remarkable pace. While many of us have been amazed by tools like ChatGPT's voice capabilities, a new frontier has emerged: interactive video chatbots that not only hear and speak but can also see you and respond in real time. This next generation of multimodal AI is redefining how we interact with technology, and it is more lifelike than ever.

A couple of years ago, the idea of a video chat with a highly realistic AI might have seemed like something straight out of science fiction. Yet, in 2024, I’ve already spoken to five such AI agents, and what seemed like an outlandish dream is now a familiar reality. The technology is advancing so quickly that it’s beginning to feel completely normal.

Before diving into the shortcomings, let's first highlight what these AIs do remarkably well. For starters, they are impressively fast. These video avatars respond in around 600 milliseconds, which is comparable to voice chat. In fact, I've had video calls with humans that lagged more noticeably than this. The avatars themselves look and sound stunning, with lifelike expressions, realistic body language, and smooth delivery that make older voice assistants like Siri or Alexa seem like relics of the past.

Even more impressive, these AIs can perceive and respond to your surroundings. In one conversation, an agent named "Carter" commented on the guitars and sound-absorbing panels in my room, showing that the AI can process visual information and weave it into the conversation.

These agents can be personalized with different personalities, memories, and even goals for the interaction. They can handle tasks such as customer service, sales, or providing information, all through a video chat interface. They are also highly adaptable: they can converse in multiple languages and appear in various settings, whether on the street, in a car, or at a café.

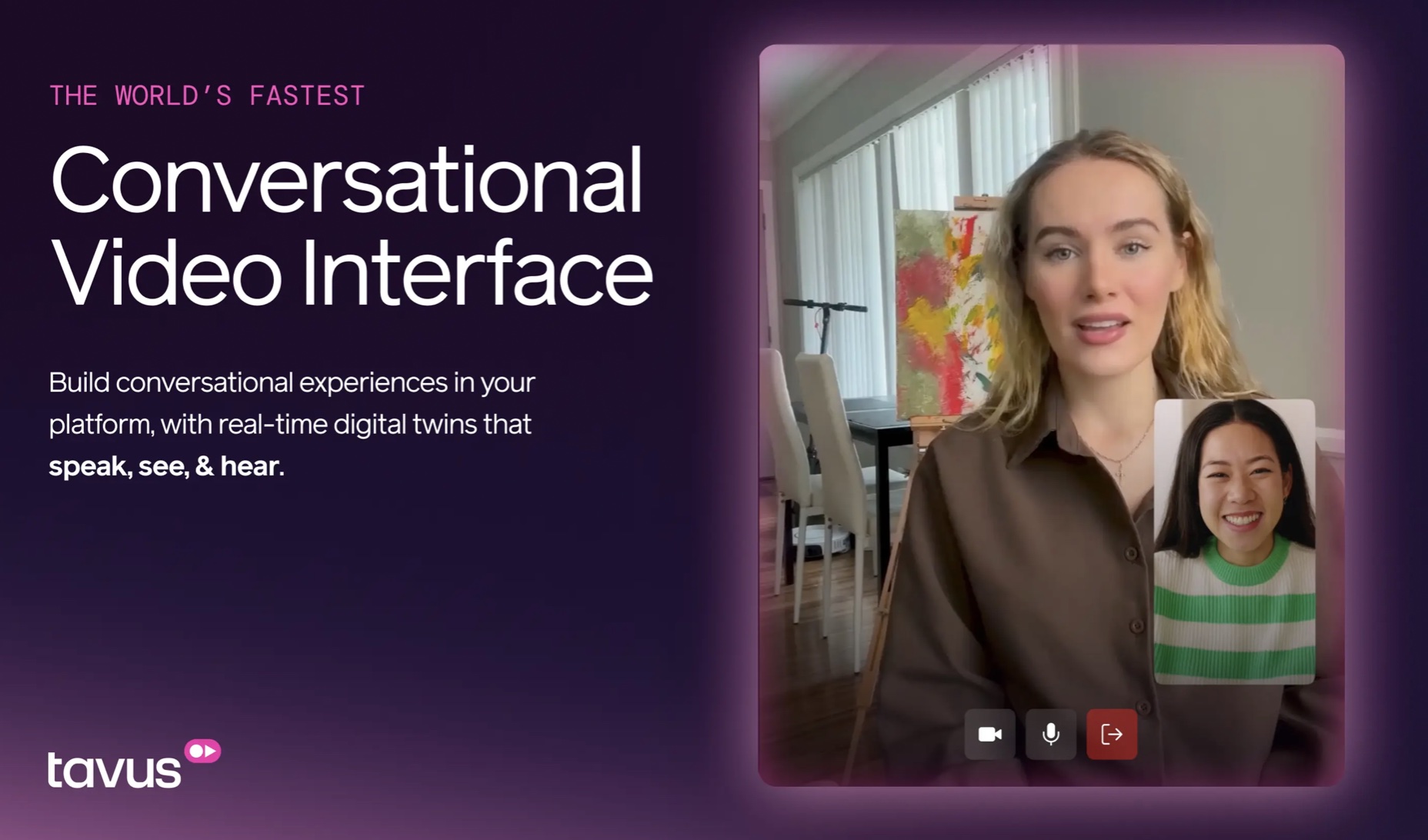

One particularly fascinating feature is the ability to create a “digital twin.” With just a two-minute video upload, a platform like Tavus can turn your look and voice into a customizable AI avatar, designed to replicate your speech patterns and appearance for real-time video chats.

As exciting as the technology is, it's still in its early stages. While the AI's visual and auditory capabilities are impressive, there are still noticeable flaws. For example, Carter's lip movements don't always sync perfectly with his voice, and his facial expressions sometimes seem out of place. Occasional glitches make the avatar's eyes move awkwardly or cause the video and audio to stutter for a moment, a reminder that this is still a digital creation.

Conversationally, these AIs still struggle with natural flow. They respond quickly, but if you pause mid-sentence, they may start talking before you've finished your thought. Human conversation leaves room for thinking time and pauses, and these systems are still learning to tell a thoughtful pause from the end of your turn without disrupting the flow.

Despite these glitches, the pace of improvement is astonishing. Within a few months, Carter and similar avatars will likely be much more polished, with many of the current shortcomings ironed out. These early versions already show how rapidly AI technology can evolve: what seems futuristic today will soon be the norm.

As these AIs become more sophisticated, their potential for both good and harm grows. Research suggests that text-based AIs can already out-persuade humans: one recent study found that an AI debating people online, when given basic personal information about them, had roughly 82% higher odds of shifting their views than a human debater. Add the ability to analyze your tone of voice and body language, and these agents could tailor their responses to be even more convincing, and more manipulative.

Imagine a scammer using a video AI that looks and sounds like someone you trust, perhaps even your own mother, and that can read your facial expressions, tone, and body language. The AI could monitor your reactions in real time, adjusting its approach to keep you engaged, distract you from doubts, or create a sense of urgency to steer your decisions. The ability to "cold-read" people from their physical cues is already a powerful tool in human psychology, and AIs may soon wield it with machine precision.

Beyond outright malicious uses, these AI agents are likely to be used for high-pressure sales, political campaigns, and even customer service. Imagine talking to a video agent that not only responds quickly but can expertly read your body language, tone, and mood, using that information to push you toward a purchase or a decision you might not have made otherwise. This could fundamentally alter how we experience persuasion, making it harder to distinguish genuine human interaction from AI manipulation.

While these AI systems could also serve as incredible therapists, mentors, or coaches, they come with significant risks. They will be able to observe and respond to our every movement, creating hyper-realistic, persuasive interactions that could be used for both good and ill.

As we embrace this new reality, it's crucial to be mindful of the risks these technologies pose. While AI-powered video agents hold great potential for positive impact, we must also consider how easily they could be exploited. They could be used for deception, misinformation, or emotional manipulation in ways that are difficult to detect.

As consumers, we will need to understand, more than ever, who owns these AIs and how they are being used. We must remain vigilant, protect our personal data, and push for these technologies to be deployed ethically and transparently.

In the end, the rapid advancement of AI means we may need to adapt to a new reality, one where virtual interactions become indistinguishable from real-life ones. Whether these changes are for better or worse depends on how society chooses to harness and regulate these powerful tools. The question is not whether this technology will become ubiquitous, but how we will navigate its ethical implications.

By Impact Lab