By Futurist Thomas Frey

We’re building the future one incompatible system at a time, and nobody seems to notice we’re heading for disaster.

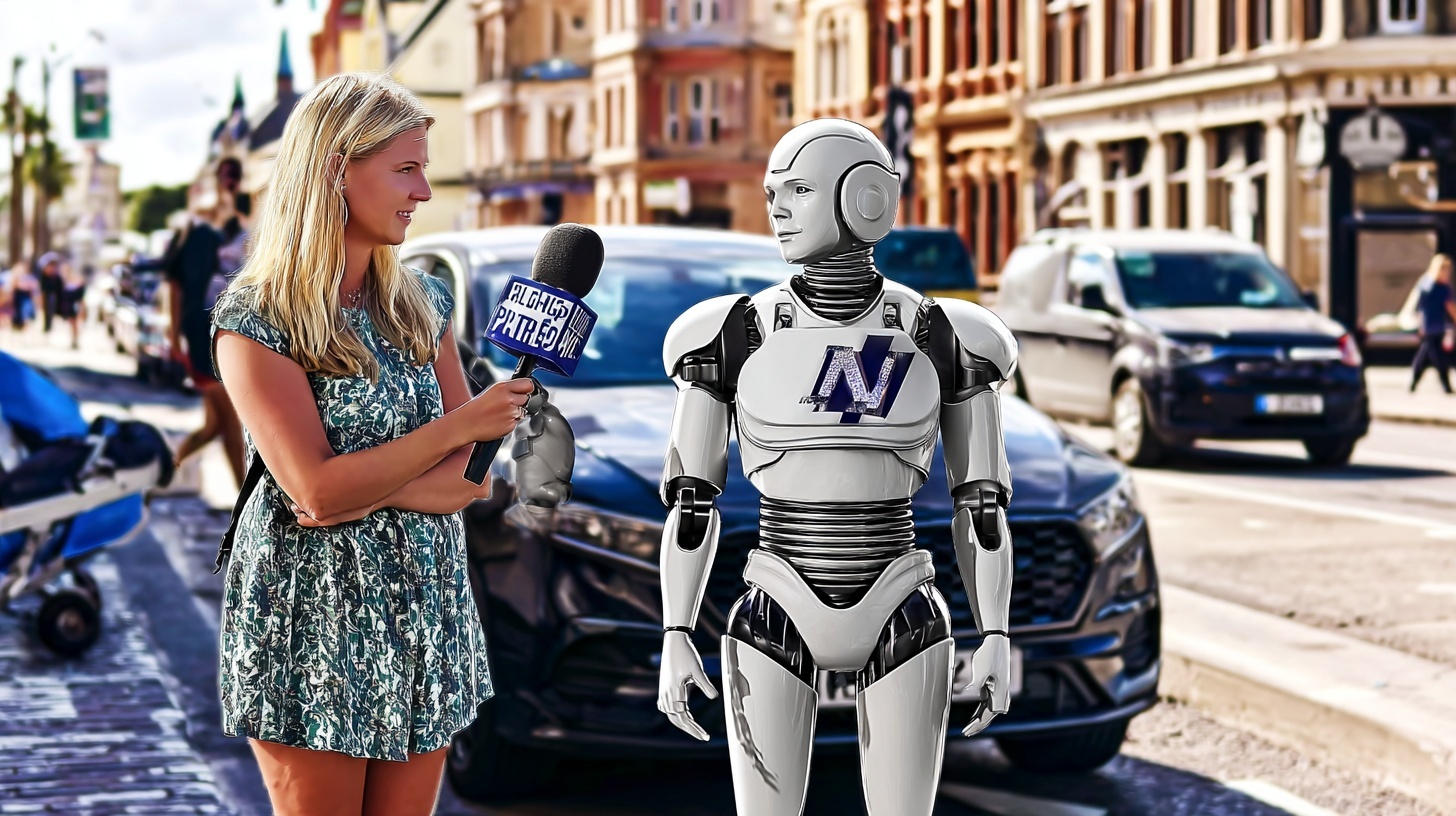

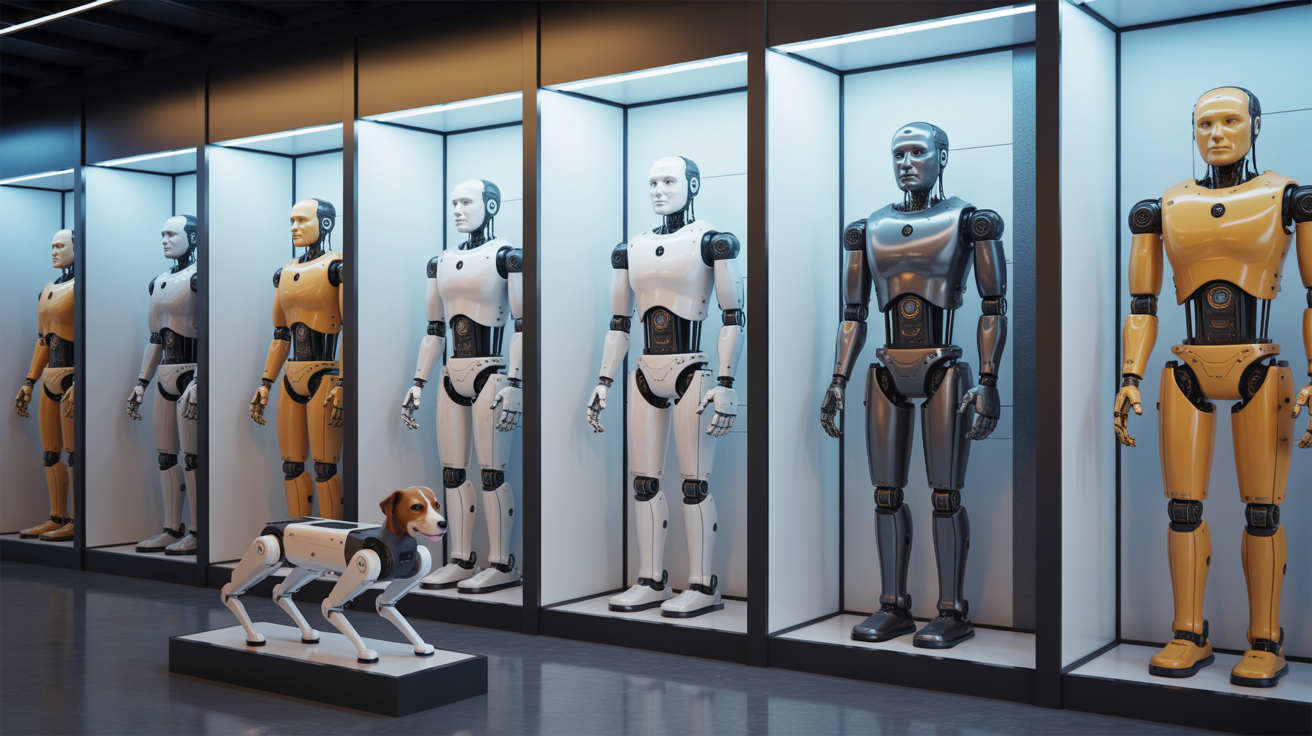

Right now, across the world, brilliant engineers are creating autonomous vehicles, delivery drones, warehouse robots, surgical robots, agricultural drones, and thousands of other AI-powered systems. Each one is remarkable. Each one represents years of innovation. And almost none of them can talk to each other.

This is the interoperability crisis, and it’s about to become the defining challenge of the AI era.

Continue reading… “The Invisible Crisis: Why AI Interoperability Will Define the Next Decade”