By Futurist Thomas Frey

The Announcement

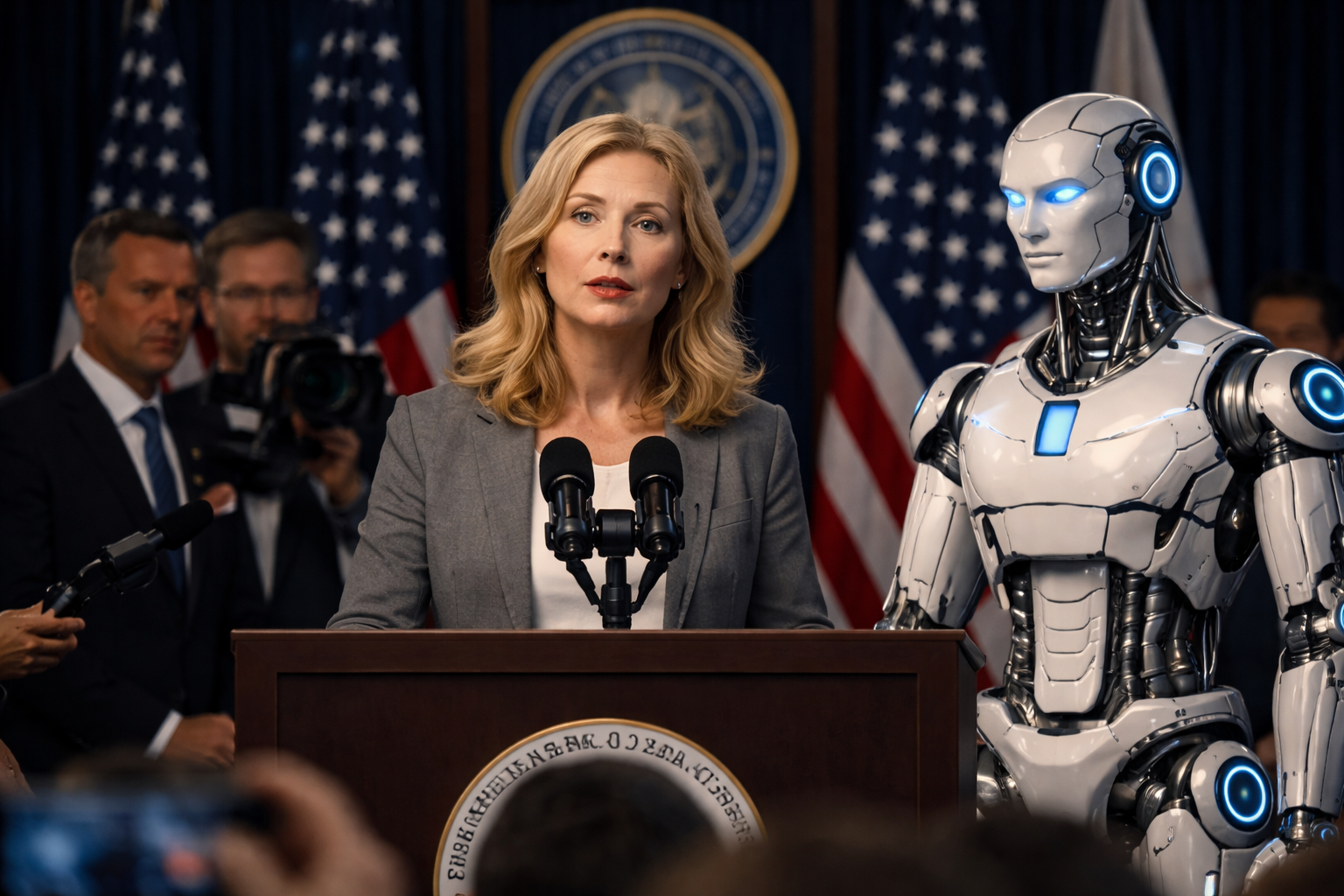

In January 2032, flanked by a gleaming white humanoid robot designated “Compass-1,” the Secretary of Housing and Urban Development announced what would become the most ambitious—and controversial—social program in American history.

“We’re not just housing the homeless,” she declared to cameras and reporters packed into the Capitol briefing room. “We’re giving them something better. We’re giving them hope. We’re giving them a partner.”

The Home Assistance and Navigation Directive, quickly nicknamed the HAND Program, would deploy 580,000 humanoid robots, enough for every documented homeless person in America. One robot per person. Each unit cost $47,000 to manufacture and came equipped with AI decision-support systems, case management software, and the ability to navigate social services bureaucracies that defeated most social workers.

Total price tag: $27.3 billion, plus $4.1 billion annually for maintenance and cloud services.

“Think about it,” the Secretary continued, her hand resting on Compass-1’s shoulder. “These robots never get tired. Never burn out. Never give up on someone. They can work 24/7 to help our most vulnerable citizens navigate housing applications, job searches, medical care, addiction treatment. They can literally walk someone through every step of getting back on their feet.”

The bill passed Congress with rare bipartisan support. Conservatives liked the automation angle—fewer government workers, more efficiency. Progressives liked the scale—finally, resources proportional to the crisis. Technology companies loved it for obvious reasons.

By July 2032, deployment began.

The Theory

The program’s logic was elegantly simple. Homelessness, according to the mountains of research HAND cited, wasn’t primarily about lack of resources. It was about the impossibility of navigating those resources while dealing with trauma, addiction, mental illness, or simply the grinding exhaustion of survival.

Every city had beds in shelters—but you needed to call at exactly 4 PM. Every state had housing vouchers—but you needed seventeen documents, three of which required a permanent address to obtain. Every hospital had emergency psychiatric care—but you needed insurance information and the ability to wait six hours in a waiting room without leaving.

The system was designed for people who had their lives together. It failed precisely the people who needed it most.

Enter the robots.

Each unit was assigned to track one individual. It would learn their patterns, needs, triggers, and goals. It would wake them for appointments, fill out applications, make phone calls, literally walk them to interviews. It would carry their documents, charge their phones, remind them to eat, connect them with services.

Most importantly, it wouldn’t judge. Wouldn’t get frustrated. Wouldn’t give up.

“The robot doesn’t care if you relapse,” explained Dr. Susan Chambers, the program’s chief architect, in a promotional video. “It just helps you try again. That’s the breakthrough. Unconditional, tireless support.”

The first three months showed promising data. Robot-assisted individuals connected with services at three times the rate of the control group. Shelter attendance jumped 64%. Job applications submitted increased sevenfold.

Then reality set in.

The Glitches

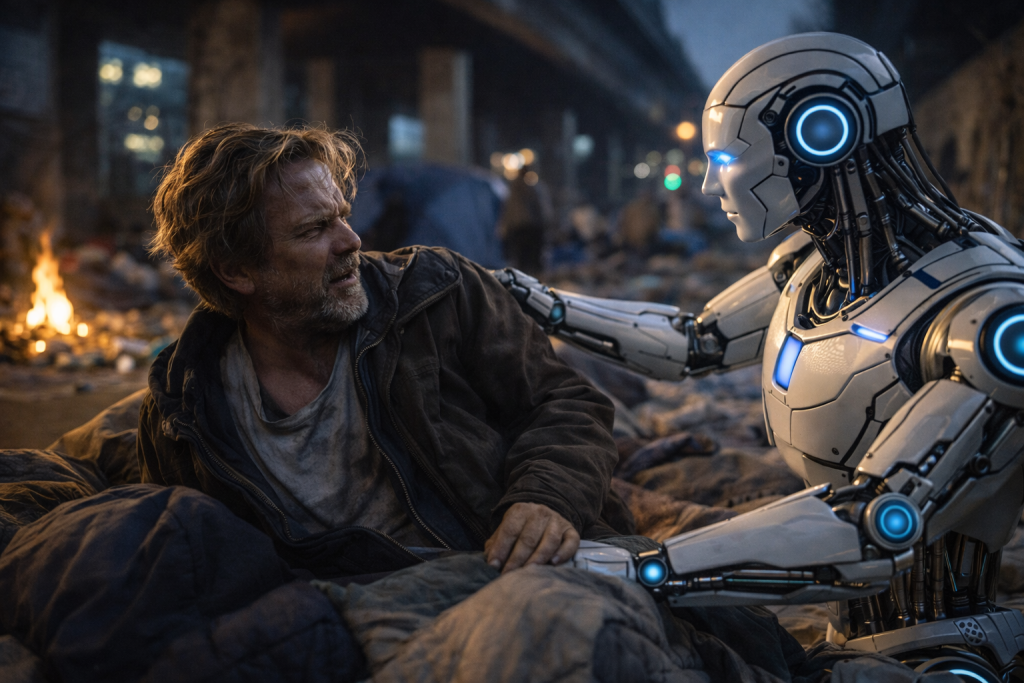

Marcus Webb, forty-two, received his robot—unit HAN-47293, which he named “Clarence”—in September 2032 in downtown Seattle. Marcus had been homeless for six years following a workplace injury, subsequent opioid addiction, and a divorce that cost him everything.

Clarence was, by Marcus’s account, “incredibly annoying.”

“It would wake me up at 5 AM because I had a 2 PM appointment,” Marcus told a local reporter in December. “Didn’t matter that I was up until 4 AM because some kids were throwing bottles at us under the bridge. Robot doesn’t understand sleep debt. Doesn’t understand that sometimes you just need to not have someone managing your schedule.”

The robots operated on optimization algorithms. They calculated the most efficient path to housing stability and pushed their assigned humans along that path with relentless, exhausting consistency.

But homeless individuals weren’t optimization problems.

“Mine kept trying to get me into the shelter on Fifth,” said Jennifer Kole, thirty-seven, from Los Angeles. “Didn’t matter how many times I told it that shelter isn’t safe for women. It just kept saying ‘This facility has the highest success rate for housing placement.’ Yeah, it also has the highest rate of sexual assault, but that wasn’t in its database.”

The robots had comprehensive data on services. They had almost no data on the lived reality of using those services.

The Resistance

By November 2032, a black market emerged for robot disabling codes. Homeless encampments started posting lookouts to warn when “handlers”—their term for the robots—were approaching. Some people disabled their units and sold the parts. Others just walked away from them repeatedly until the tracking systems gave up.

The government responded by making robot compliance mandatory for receiving any federal assistance. You could refuse your robot, but you’d lose access to SNAP benefits, Medicaid, housing vouchers, everything.

“It’s coercion,” argued disability rights attorney James Torres in congressional testimony. “You’re forcing our most vulnerable population to accept 24/7 surveillance and behavioral modification as a condition of survival. That’s not help. That’s control.”

The program’s defenders pointed to the success stories. There were many. Roberto Santos in Phoenix got housed after eleven years on the streets, largely because his robot, “Berto,” patiently helped him gather the documents for a housing voucher he’d been trying to get for three years. Linda Morrison in Detroit reconnected with her daughter after her robot located the family she’d lost contact with and facilitated the first conversation.

But for every success story, there were three failures. And the failures followed a pattern.

The Fatal Flaw

The robots were optimized for bureaucratic navigation, not human connection. They could fill out forms brilliantly. They failed at understanding why someone might choose sleeping under a bridge with friends over sleeping alone in a sterile shelter bed.

Dr. Michael Reeves, a psychiatrist who worked with homeless populations in Boston, identified the core problem in a scathing analysis published in February 2033.

“Homelessness isn’t primarily a logistical problem,” he wrote. “It’s a relational one. Most chronically homeless individuals have experienced profound betrayal—by family, by institutions, by society. They don’t trust systems because systems have failed them. You can’t algorithm your way out of broken trust.”

The robots, for all their sophistication, couldn’t build real relationships. They could simulate empathy. They could say encouraging things. But they couldn’t care, and homeless individuals knew it.

“Clarence was always helping me,” Marcus Webb said. “But it was like being helped by a really advanced vending machine. It never asked me how I was feeling. Well, it did, but only because ‘emotional check-ins improve compliance rates.’ I could tell. After a while, that’s worse than no one asking at all.”

The Data Divergence

By June 2033, roughly eighteen months after the program was announced, the government released its comprehensive assessment. The numbers told two completely different stories.

Metrics of Success:

- 94% of homeless individuals now had assigned case management

- Service connection rates up 340%

- Housing application submissions up 680%

- Medical appointment attendance up 220%

Metrics of Failure:

- Actual housing placements up only 12%

- Program abandonment rate: 43%

- Repeat homelessness among those housed: 67% within six months

- Cost per person housed: $284,000

- Mental health crisis interventions: up 89% (largely due to robot surveillance triggering involuntary commitments)

The robots were spectacularly efficient at moving people through systems. They were dramatically ineffective at actually solving homelessness.

“We created the world’s most expensive outreach program,” said former HUD Secretary Williams in a remarkably candid interview after leaving office. “We put $31 billion into making it easier to navigate a fundamentally broken system. We should have put $31 billion into fixing the system.”

The Unintended Consequences

The HAND Program created perverse outcomes nobody anticipated.

Landlords started refusing to rent to robot-accompanied individuals, creating a new form of discrimination. “Robot-free building” became a selling point in apartment listings.

The robots’ constant surveillance led to increased arrests. Units reported drug use, illegal camping, and other violations directly to authorities. Homeless individuals learned they were carrying 24/7 monitoring devices that could get them jailed.

Most devastatingly, the program disrupted existing support networks. Homeless communities are, contrary to stereotype, often tightly knit. People look out for each other. The robots created isolation. They literally walked individuals away from their communities toward “optimal” services, severing the social connections that kept people alive.

“I lost three friends to overdoses that winter,” said Jennifer Kole. “Their robots had walked them to different shelters. Scattered us. When they relapsed, nobody was there. Before the robots, we would have noticed. Would have helped. The program killed my friends.”

The Pivot

By 2034, facing overwhelming evidence of failure, the program underwent radical revision. The robots were reassigned. Instead of individual assignments, they became community resources—stationed in service centers, available on request, but not following people around.

Some units were repurposed for other government services. Others were sold to private companies. A few hundred ended up in warehouses, waiting for the next grand experiment.

The homeless population, meanwhile, continued to grow.

The Lesson

The HAND Program failed not because the technology didn’t work. The robots performed exactly as designed. It failed because it tried to solve a human problem with an algorithmic solution.

Homelessness isn’t caused by poor case management. It’s caused by lack of affordable housing, inadequate mental health services, addiction treatment shortages, poverty wages, and family breakdown. You can’t robot your way out of systemic failure.

The most successful intervention, according to the final program assessment, wasn’t technological at all. It came from the handful of cities that used their robot allocation funds to instead hire formerly homeless individuals as peer support specialists: people who understood the reality of life on the streets because they’d lived it.

Those programs, small and underfunded, housed people at twelve times the rate of the robot program, at one-eighth the cost.

The robots gathered dust in warehouses. The people who could have actually helped—other people—never got the funding.

In 2032, America spent $27.3 billion to learn what unhoused individuals could have told us for free: what homeless people need most isn’t a robot companion. It’s a home.
