By Futurist Thomas Frey

The Minority Report Problem Is Already Here

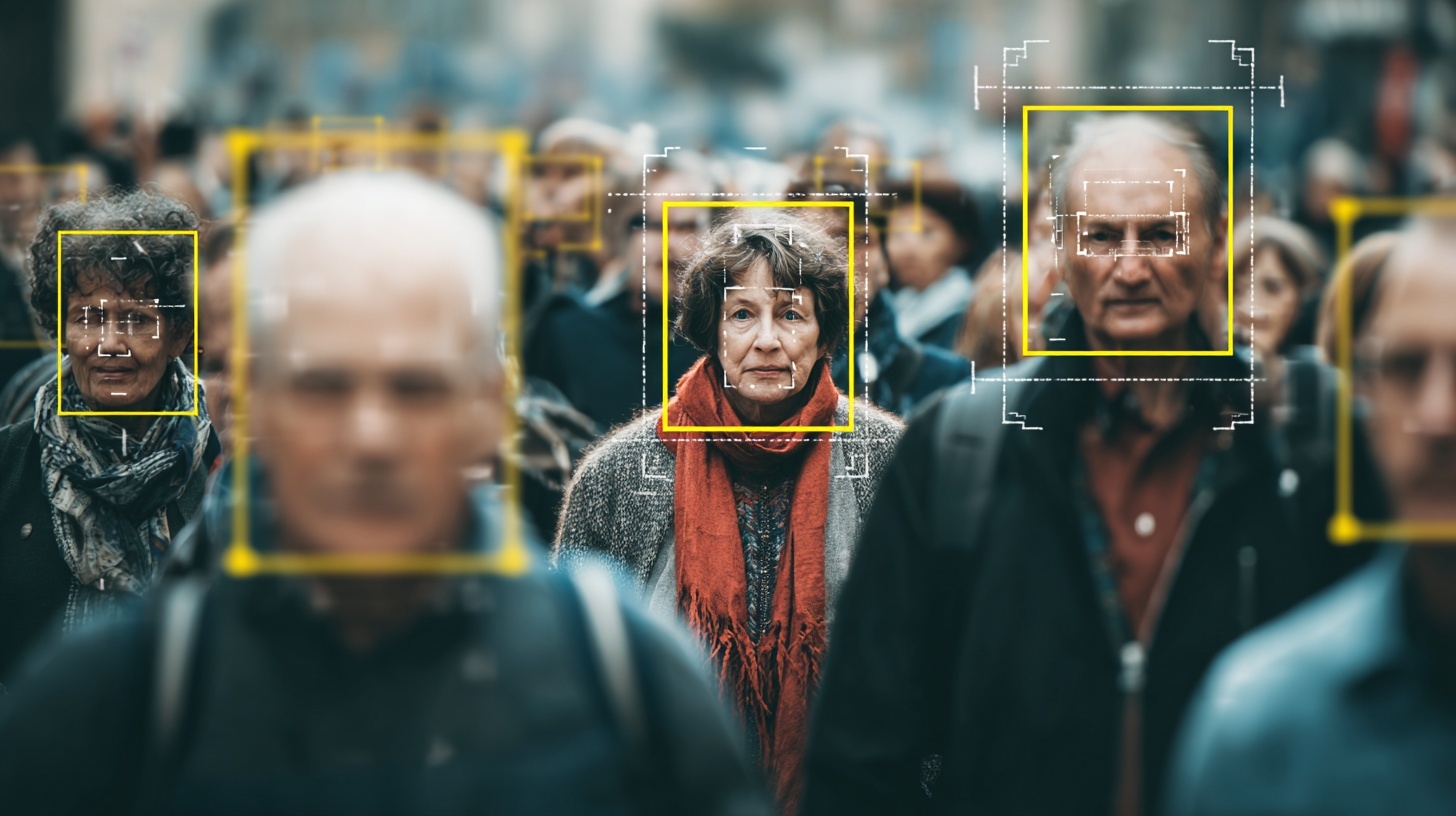

By 2032, most crimes won’t be stopped by catching perpetrators in the act. They’ll be interrupted before the act occurs, because AI systems will have detected suspicious patterns and flagged the risk. Not science fiction. Not hypothetical. The technology exists now, and deployment is already beginning in cities worldwide.

Sensors embedded throughout urban environments are learning to recognize motion patterns associated with criminal behavior. The way someone approaches an ATM. How they scan a parking lot. The body language preceding a mugging. Vocal stress signatures that indicate deception or violent intent. Anomalous behaviors that deviate from typical patterns in ways that correlate with criminal activity.

The AI doesn’t need to understand why these patterns predict crime; it just needs to recognize that they do. Machine learning systems trained on millions of hours of surveillance footage have become eerily good at predicting when someone is about to commit a crime, often minutes before it happens. Accuracy rates are already surpassing human intuition, and they’re improving rapidly.

The unusual part isn’t the technology. It’s the implication. We’re shifting from punishing crimes that happened to preventing crimes that might happen. From catching criminals to identifying people displaying pre-criminal patterns. From investigating acts to monitoring intentions. And nobody has figured out the ethics of arresting someone for something they were about to do but hadn’t yet done.
