Baxter is a robot meant to work with people in small manufacturing facilities.

Erik Brynjolfsson, a professor at the MIT Sloan School of Management, and his collaborator and coauthor Andrew McAfee have been arguing for the last year and a half that impressive advances in computer technology—from improved industrial robotics to automated translation services—are largely behind the sluggish employment growth of the last 10 to 15 years. Even more ominous for workers, the MIT academics foresee dismal prospects for many types of jobs as these powerful new technologies are increasingly adopted not only in manufacturing, clerical, and retail work but in professions such as law, financial services, education, and medicine.

That robots, automation, and software can replace people might seem obvious to anyone who’s worked in automotive manufacturing or as a travel agent. But Brynjolfsson and McAfee’s claim is more troubling and controversial. They believe that rapid technological change has been destroying jobs faster than it is creating them, contributing to the stagnation of median income and the growth of inequality in the United States. And, they suspect, something similar is happening in other technologically advanced countries.

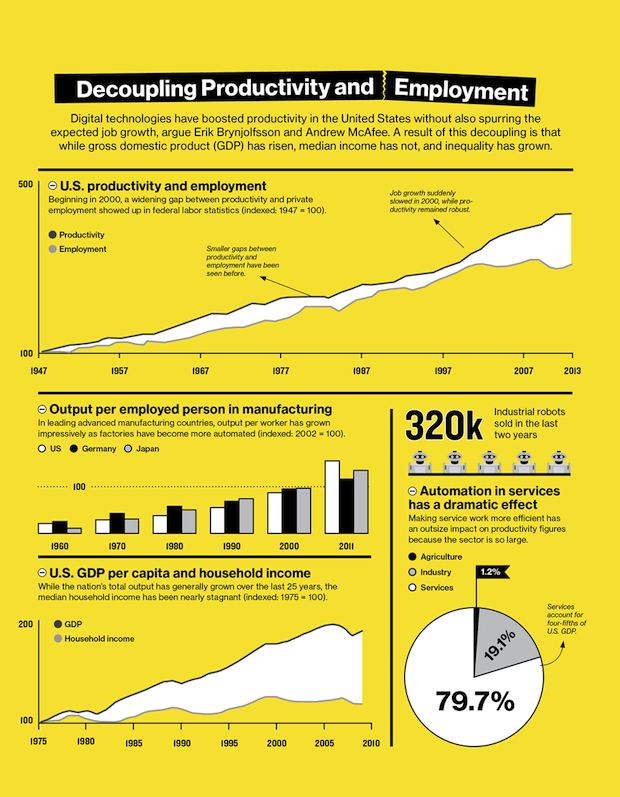

Perhaps the most damning piece of evidence, according to Brynjolfsson, is a chart that only an economist could love. In economics, productivity—the amount of economic value created for a given unit of input, such as an hour of labor—is a crucial indicator of growth and wealth creation. It is a measure of progress. On the chart Brynjolfsson likes to show, separate lines represent productivity and total employment in the United States. For years after World War II, the two lines closely tracked each other, with increases in jobs corresponding to increases in productivity. The pattern is clear: as businesses generated more value from their workers, the country as a whole became richer, which fueled more economic activity and created even more jobs. Then, beginning in 2000, the lines diverge; productivity continues to rise robustly, but employment suddenly wilts. By 2011, a significant gap appears between the two lines, showing economic growth with no parallel increase in job creation. Brynjolfsson and McAfee call it the “great decoupling.” And Brynjolfsson says he is confident that technology is behind both the healthy growth in productivity and the weak growth in jobs.

It’s a startling assertion because it threatens the faith that many economists place in technological progress. Brynjolfsson and McAfee still believe that technology boosts productivity and makes societies wealthier, but they think that it can also have a dark side: technological progress is eliminating the need for many types of jobs and leaving the typical worker worse off than before. Brynjolfsson can point to a second chart indicating that median income is failing to rise even as the gross domestic product soars. “It’s the great paradox of our era,” he says. “Productivity is at record levels, innovation has never been faster, and yet at the same time, we have a falling median income and we have fewer jobs. People are falling behind because technology is advancing so fast and our skills and organizations aren’t keeping up.”

Brynjolfsson and McAfee are not Luddites. Indeed, they are sometimes accused of being too optimistic about the extent and speed of recent digital advances. Brynjolfsson says they began writing Race Against the Machine, the 2011 book in which they laid out much of their argument, because they wanted to explain the economic benefits of these new technologies (Brynjolfsson spent much of the 1990s sniffing out evidence that information technology was boosting rates of productivity). But it became clear to them that the same technologies making many jobs safer, easier, and more productive were also reducing the demand for many types of human workers.

Anecdotal evidence that digital technologies threaten jobs is, of course, everywhere. Robots and advanced automation have been common in many types of manufacturing for decades. In the United States and China, the world’s manufacturing powerhouses, fewer people work in manufacturing today than in 1997, thanks at least in part to automation. Modern automotive plants, many of which were transformed by industrial robotics in the 1980s, routinely use machines that autonomously weld and paint body parts, tasks that were once handled by humans. Most recently, industrial robots like Rethink Robotics’ Baxter (see “The Blue-Collar Robot,” May/June 2013), more flexible and far cheaper than their predecessors, have been introduced to perform simple jobs for small manufacturers in a variety of sectors. The website of a Silicon Valley startup called Industrial Perception features a video of its warehouse robot picking up and throwing boxes like a bored elephant. And such sensations as Google’s driverless car suggest what automation might be able to accomplish someday soon.

A less dramatic change, but one with a potentially far larger impact on employment, is taking place in clerical work and professional services. Technologies like the Web, artificial intelligence, big data, and improved analytics (all made possible by the ever-increasing availability of cheap computing power and storage capacity) are automating many routine tasks. Countless traditional white-collar jobs, such as many in the post office and in customer service, have disappeared. W. Brian Arthur, a visiting researcher at the Xerox Palo Alto Research Center’s intelligence systems lab and a former economics professor at Stanford University, calls it the “autonomous economy.” It’s far more subtle than the idea of robots and automation doing human jobs, he says: it involves “digital processes talking to other digital processes and creating new processes,” enabling us to do many things with fewer people and making yet other human jobs obsolete.

It is this onslaught of digital processes, says Arthur, that primarily explains how productivity has grown without a significant increase in human labor. And, he says, “digital versions of human intelligence” are increasingly replacing even those jobs once thought to require people. “It will change every profession in ways we have barely seen yet,” he warns.

McAfee, associate director of the MIT Center for Digital Business at the Sloan School of Management, speaks rapidly and with a certain awe as he describes advances such as Google’s driverless car. Still, despite his obvious enthusiasm for the technologies, he doesn’t see the recently vanished jobs coming back. The pressure on employment and the resulting inequality will only get worse, he suggests, as digital technologies—fueled with “enough computing power, data, and geeks”—continue their exponential advances over the next several decades. “I would like to be wrong,” he says, “but when all these science-fiction technologies are deployed, what will we need all the people for?”

New Economy?

But are these new technologies really responsible for a decade of lackluster job growth? Many labor economists say the data are, at best, far from conclusive. Several other plausible explanations, including events related to global trade and the financial crises of the early and late 2000s, could account for the relative slowness of job creation since the turn of the century. “No one really knows,” says Richard Freeman, a labor economist at Harvard University. That’s because it’s very difficult to “extricate” the effects of technology from other macroeconomic effects, he says. But he’s skeptical that technology would change a wide range of business sectors fast enough to explain recent job numbers.

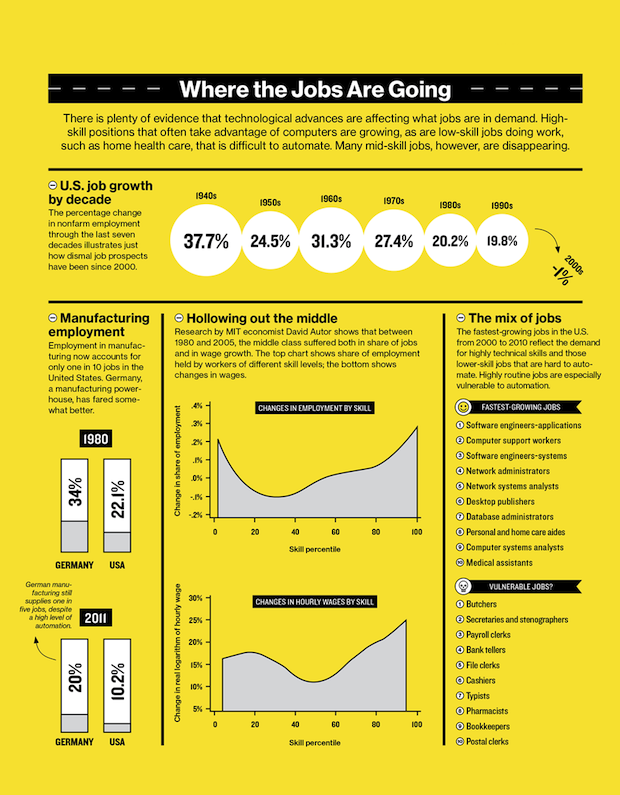

Employment trends have polarized the workforce and hollowed out the middle class.

David Autor, an economist at MIT who has extensively studied the connections between jobs and technology, also doubts that technology could account for such an abrupt change in total employment. “There was a great sag in employment beginning in 2000. Something did change,” he says. “But no one knows the cause.” Moreover, he doubts that productivity has, in fact, risen robustly in the United States in the past decade (economists can disagree about that statistic because there are different ways of measuring and weighing economic inputs and outputs). If he’s right, it raises the possibility that poor job growth could be simply a result of a sluggish economy. The sudden slowdown in job creation “is a big puzzle,” he says, “but there’s not a lot of evidence it’s linked to computers.”

To be sure, Autor says, computer technologies are changing the types of jobs available, and those changes “are not always for the good.” At least since the 1980s, he says, computers have increasingly taken over such tasks as bookkeeping, clerical work, and repetitive production jobs in manufacturing—all of which typically provided middle-class pay. At the same time, higher-paying jobs requiring creativity and problem-solving skills, often aided by computers, have proliferated. So have low-skill jobs: demand has increased for restaurant workers, janitors, home health aides, and others doing service work that is nearly impossible to automate. The result, says Autor, has been a “polarization” of the workforce and a “hollowing out” of the middle class—something that has been happening in numerous industrialized countries for the last several decades. But “that is very different from saying technology is affecting the total number of jobs,” he adds. “Jobs can change a lot without there being huge changes in employment rates.”

What’s more, even if today’s digital technologies are holding down job creation, history suggests that it is most likely a temporary, albeit painful, shock; as workers adjust their skills and entrepreneurs create opportunities based on the new technologies, the number of jobs will rebound. That, at least, has always been the pattern. The question, then, is whether today’s computing technologies will be different, creating long-term involuntary unemployment.

At least since the Industrial Revolution began in the 1700s, improvements in technology have changed the nature of work and destroyed some types of jobs in the process. In 1900, 41 percent of Americans worked in agriculture; by 2000, it was only 2 percent. Likewise, the proportion of Americans employed in manufacturing has dropped from 30 percent in the post–World War II years to around 10 percent today—partly because of increasing automation, especially during the 1980s.

While such changes can be painful for workers whose skills no longer match the needs of employers, Lawrence Katz, a Harvard economist, says that no historical pattern shows these shifts leading to a net decrease in jobs over an extended period. Katz has done extensive research on how technological advances have affected jobs over the last few centuries—describing, for example, how highly skilled artisans in the mid-19th century were displaced by lower-skilled workers in factories. While it can take decades for workers to acquire the expertise needed for new types of employment, he says, “we never have run out of jobs. There is no long-term trend of eliminating work for people. Over the long term, employment rates are fairly stable. People have always been able to create new jobs. People come up with new things to do.”

Still, Katz doesn’t dismiss the notion that there is something different about today’s digital technologies—something that could affect an even broader range of work. The question, he says, is whether economic history will serve as a useful guide. Will the job disruptions caused by technology be temporary as the workforce adapts, or will we see a science-fiction scenario in which automated processes and robots with superhuman skills take over a broad swath of human tasks? Though Katz expects the historical pattern to hold, it is “genuinely a question,” he says. “If technology disrupts enough, who knows what will happen?”

Dr. Watson

To get some insight into Katz’s question, it is worth looking at how today’s most advanced technologies are being deployed in industry. Though these technologies have undoubtedly taken over some human jobs, finding evidence of workers being displaced by machines on a large scale is not all that easy. One reason it is difficult to pinpoint the net impact on jobs is that automation is often used to make human workers more efficient, not necessarily to replace them. Rising productivity means businesses can do the same work with fewer employees, but it can also enable the businesses to expand production with their existing workers, and even to enter new markets.

Take the bright-orange Kiva robot, a boon to fledgling e-commerce companies. Created and sold by Kiva Systems, a startup that was founded in 2002 and bought by Amazon for $775 million in 2012, the robots are designed to scurry across large warehouses, fetching racks of ordered goods and delivering the products to humans who package the orders. In Kiva’s large demonstration warehouse and assembly facility at its headquarters outside Boston, fleets of robots move about with seemingly endless energy: some newly assembled machines perform tests to prove they’re ready to be shipped to customers around the world, while others wait to demonstrate to a visitor how they can almost instantly respond to an electronic order and bring the desired product to a worker’s station.

A warehouse equipped with Kiva robots can handle up to four times as many orders as a similar unautomated warehouse, where workers might spend as much as 70 percent of their time walking about to retrieve goods. (Coincidentally or not, Amazon bought Kiva soon after a press report revealed that workers at one of the retailer’s giant warehouses often walked more than 10 miles a day.)

Despite the labor-saving potential of the robots, Mick Mountz, Kiva’s founder and CEO, says he doubts the machines have put many people out of work or will do so in the future. For one thing, he says, most of Kiva’s customers are e-commerce retailers, some of them growing so rapidly they can’t hire people fast enough. By making distribution operations cheaper and more efficient, the robotic technology has helped many of these retailers survive and even expand. Before founding Kiva, Mountz worked at Webvan, an online grocery delivery company that was one of the 1990s dot-com era’s most infamous flameouts. He likes to show the numbers demonstrating that Webvan was doomed from the start: a $100 order cost the company $120 to ship. Mountz’s point is clear: something as mundane as the cost of materials handling can consign a new business to an early death. Automation can solve that problem.

Meanwhile, Kiva itself is hiring. Orange balloons—the same color as the robots—hover over multiple cubicles in its sprawling office, signaling that the occupants arrived within the last month. Most of these new employees are software engineers: while the robots are the company’s poster boys, its lesser-known innovations lie in the complex algorithms that guide the robots’ movements and determine where in the warehouse products are stored. These algorithms help make the system adaptable. It can learn, for example, that a certain product is seldom ordered, so it should be stored in a remote area.
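To make the storage idea concrete, here is a minimal sketch of frequency-based slotting: products that are ordered most often get the slots closest to the packing station, and rarely ordered ones end up in remote corners. This is not Kiva's actual algorithm, which the company has not described here. The function name `assign_slots`, the sample products, and the distances are all hypothetical, chosen only for illustration.

```python
from collections import Counter


def assign_slots(order_history, slot_distances):
    """Give the most frequently ordered products the closest slots.

    order_history: iterable of product IDs, one entry per ordered item.
    slot_distances: dict mapping slot ID -> distance from the packing station.
    Returns a dict mapping product ID -> slot ID.
    (Hypothetical helper; an illustration, not Kiva's method.)
    """
    counts = Counter(order_history)
    # Most-ordered products first; ties broken by name so results are stable.
    products = sorted(counts, key=lambda p: (-counts[p], p))
    # Nearest slots first; ties broken by slot ID.
    slots = sorted(slot_distances, key=lambda s: (slot_distances[s], s))
    if len(products) > len(slots):
        raise ValueError("not enough slots for all products")
    return dict(zip(products, slots))


# Made-up order log and warehouse layout (distances in meters).
history = ["soap", "soap", "soap", "batteries", "batteries", "snorkel"]
distances = {"A1": 5, "B7": 40, "C3": 120}

layout = assign_slots(history, distances)
print(layout)
```

In this toy run the seldom-ordered snorkel lands in the farthest slot, while the soap sits next to the packer. A real system would presumably re-run something like this as order patterns shift, which is one way to read the claim that the software "learns."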

Though advances like these suggest how some aspects of work could be subject to automation, they also illustrate that humans still excel at certain tasks—for example, packaging various items together. Many of the traditional problems in robotics—such as how to teach a machine to recognize an object as, say, a chair—remain largely intractable and are especially difficult to solve when the robots are free to move about a relatively unstructured environment like a factory or office.

Techniques using vast amounts of computational power have gone a long way toward helping robots understand their surroundings, but John Leonard, a professor of engineering at MIT and a member of its Computer Science and Artificial Intelligence Laboratory (CSAIL), says many familiar difficulties remain. “Part of me sees accelerating progress; the other part of me sees the same old problems,” he says. “I see how hard it is to do anything with robots. The big challenge is uncertainty.” In other words, people are still far better at dealing with changes in their environment and reacting to unexpected events.

For that reason, Leonard says, it is easier to see how robots could work with humans than on their own in many applications. “People and robots working together can happen much more quickly than robots simply replacing humans,” he says. “That’s not going to happen in my lifetime at a massive scale. The semiautonomous taxi will still have a driver.”

One of the friendlier, more flexible robots meant to work with humans is Rethink’s Baxter. The creation of Rodney Brooks, the company’s founder, Baxter needs minimal training to perform simple tasks like picking up objects and moving them to a box. It’s meant for use in relatively small manufacturing facilities where conventional industrial robots would cost too much and pose too much danger to workers. The idea, says Brooks, is to have the robots take care of dull, repetitive jobs that no one wants to do.

It’s hard not to instantly like Baxter, in part because it seems so eager to please. The “eyebrows” on its display rise quizzically when it’s puzzled; its arms submissively and gently retreat when bumped. Asked about the claim that such advanced industrial robots could eliminate jobs, Brooks answers simply that he doesn’t see it that way. Robots, he says, can be to factory workers as electric drills are to construction workers: “It makes them more productive and efficient, but it doesn’t take jobs.”

The machines created at Kiva and Rethink have been cleverly designed and built to work with people, taking over tasks that humans often don’t want to do or aren’t especially good at. They are specifically designed to enhance these workers’ productivity. And it’s hard to see how even these increasingly sophisticated robots will replace humans in most manufacturing and industrial jobs anytime soon. But clerical and some professional jobs could be more vulnerable. That’s because the marriage of artificial intelligence and big data is beginning to give machines a more humanlike ability to reason and to solve many new types of problems.

In the tony northern suburbs of New York City, IBM Research is pushing super-smart computing into the realms of such professions as medicine, finance, and customer service. IBM’s efforts have resulted in Watson, a computer system best known for beating human champions on the game show Jeopardy! in 2011. That version of Watson now sits in a corner of a large data center at the research facility in Yorktown Heights, marked with a glowing plaque commemorating its glory days. Meanwhile, researchers there are already testing new generations of Watson in medicine, where the technology could help physicians diagnose diseases like cancer, evaluate patients, and prescribe treatments.

IBM likes to call it cognitive computing. Essentially, Watson uses artificial-intelligence techniques, advanced natural-language processing and analytics, and massive amounts of data drawn from sources specific to a given application (in the case of health care, that means medical journals, textbooks, and information collected from the physicians or hospitals using the system). Thanks to these innovative techniques and huge amounts of computing power, it can quickly come up with “advice”—for example, the most recent and relevant information to guide a doctor’s diagnosis and treatment decisions.

Despite the system’s remarkable ability to make sense of all that data, it’s still early days for Dr. Watson. While it has rudimentary abilities to “learn” from specific patterns and evaluate different possibilities, it is far from having the type of judgment and intuition a physician often needs. But IBM has also announced it will begin selling Watson’s services to customer-support call centers, which rarely require human judgment that’s quite so sophisticated. IBM says companies will rent an updated version of Watson for use as a “customer service agent” that responds to questions from consumers; it has already signed on several banks. Automation is nothing new in call centers, of course, but Watson’s improved capacity for natural-language processing and its ability to tap into a large amount of data suggest that this system could speak plainly with callers, offering them specific advice on even technical and complex questions. It’s easy to see it replacing many human holdouts in its new field.

Digital Losers

The contention that automation and digital technologies are partly responsible for today’s lack of jobs has obviously touched a raw nerve for many worried about their own employment. But this is only one consequence of what Brynjolfsson and McAfee see as a broader trend. The rapid acceleration of technological progress, they say, has greatly widened the gap between economic winners and losers—the income inequalities that many economists have worried about for decades. Digital technologies tend to favor “superstars,” they point out. For example, someone who creates a computer program to automate tax preparation might earn millions or billions of dollars while eliminating the need for countless accountants.

New technologies are “encroaching into human skills in a way that is completely unprecedented,” McAfee says, and many middle-class jobs are right in the bull’s-eye; even relatively high-skill work in education, medicine, and law is affected. “The middle seems to be going away,” he adds. “The top and bottom are clearly getting farther apart.” While technology might be only one factor, says McAfee, it has been an “underappreciated” one, and it is likely to become increasingly significant.

Not everyone agrees with Brynjolfsson and McAfee’s conclusions—particularly the contention that the impact of recent technological change could be different from anything seen before. But it’s hard to ignore their warning that technology is widening the income gap between the tech-savvy and everyone else. And even if the economy is only going through a transition similar to those it’s endured before, it is an extremely painful one for many workers, and that will have to be addressed somehow. Harvard’s Katz has shown that the United States prospered in the early 1900s in part because secondary education became accessible to many people at a time when employment in agriculture was drying up. The result, at least through the 1980s, was an increase in educated workers who found jobs in the industrial sectors, boosting incomes and reducing inequality. Katz’s lesson: painful long-term consequences for the labor force do not follow inevitably from technological changes.

Brynjolfsson himself says he’s not ready to conclude that economic progress and employment have diverged for good. “I don’t know whether we can recover, but I hope we can,” he says. But that, he suggests, will depend on recognizing the problem and taking steps such as investing more in the training and education of workers.

“We were lucky and steadily rising productivity raised all boats for much of the 20th century,” he says. “Many people, especially economists, jumped to the conclusion that was just the way the world worked. I used to say that if we took care of productivity, everything else would take care of itself; it was the single most important economic statistic. But that’s no longer true.” He adds, “It’s one of the dirty secrets of economics: technology progress does grow the economy and create wealth, but there is no economic law that says everyone will benefit.” In other words, in the race against the machine, some are likely to win while many others lose.