The newest groundbreaking AI tech? Unsupervised image-to-image translation. It allows you to auto-generate a different photo based off of an input photo. Essentially, it’s lying to your eyes.

The research paper on the subject of image manipulation, published today by Neural Information Processing Systems (NIPS), isn’t the first to broach the topic of AI’s visual trickery, but it does come with one of the more stunning examples of the same.

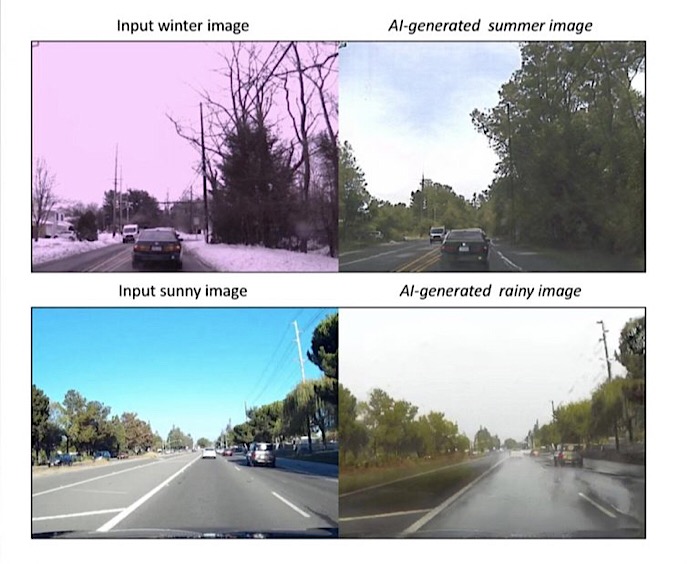

Changing Seasons

Titled “Unsupervised Image-to-Image Translation Networks,” by researchers Ming-Yu Liu, Thomas Breuel, and Jan Kautz, the study compares the current image translation methods with its own version:

“Since there exists an infinite set of joint distributions that can arrive the given marginal distributions, one could infer nothing about the joint distribution from the marginal distributions without additional assumptions. To address the problem, we make a shared-latent space assumption and propose an unsupervised image-to-image translation framework based on Coupled GANs.”

This proposed framework was paired with the competing technologies in the paper, alongside “high quality image translation results” in a variety of areas, including animal images, face images, and street scene images (pictured above).

Video Isn’t Safe Either

Stanford University has developed the software needed to seamlessly replace the mouth of anyone in existing footage with the mouth of an actor who can lip-sync anything he or she wants. Check out the video above for an example of the sweeping power at our disposal.

Audio to accompany the visuals can’t yet be generated at the same level of quality, though researchers at the University of Washington have paired audio from Obama with a different scenes of the president to make it appear as though he is giving the same speech on two different days.

Needless to say, as much as we have discussed “fake news” this year, the phenomenon is posed to explode in the coming years. And we have AI to thank for it.

Via Tech.co