The human brain is often described as the most complex object in the known universe. Modern neuroscience has mapped countless neural pathways, yet the deepest regions of the brain, structures such as the basal ganglia and the thalamus, remain a stubborn frontier. These areas help govern movement, emotion, motivation, and decision-making, and when they go awry they are implicated in conditions as devastating as Parkinson’s disease, depression, and essential tremor.

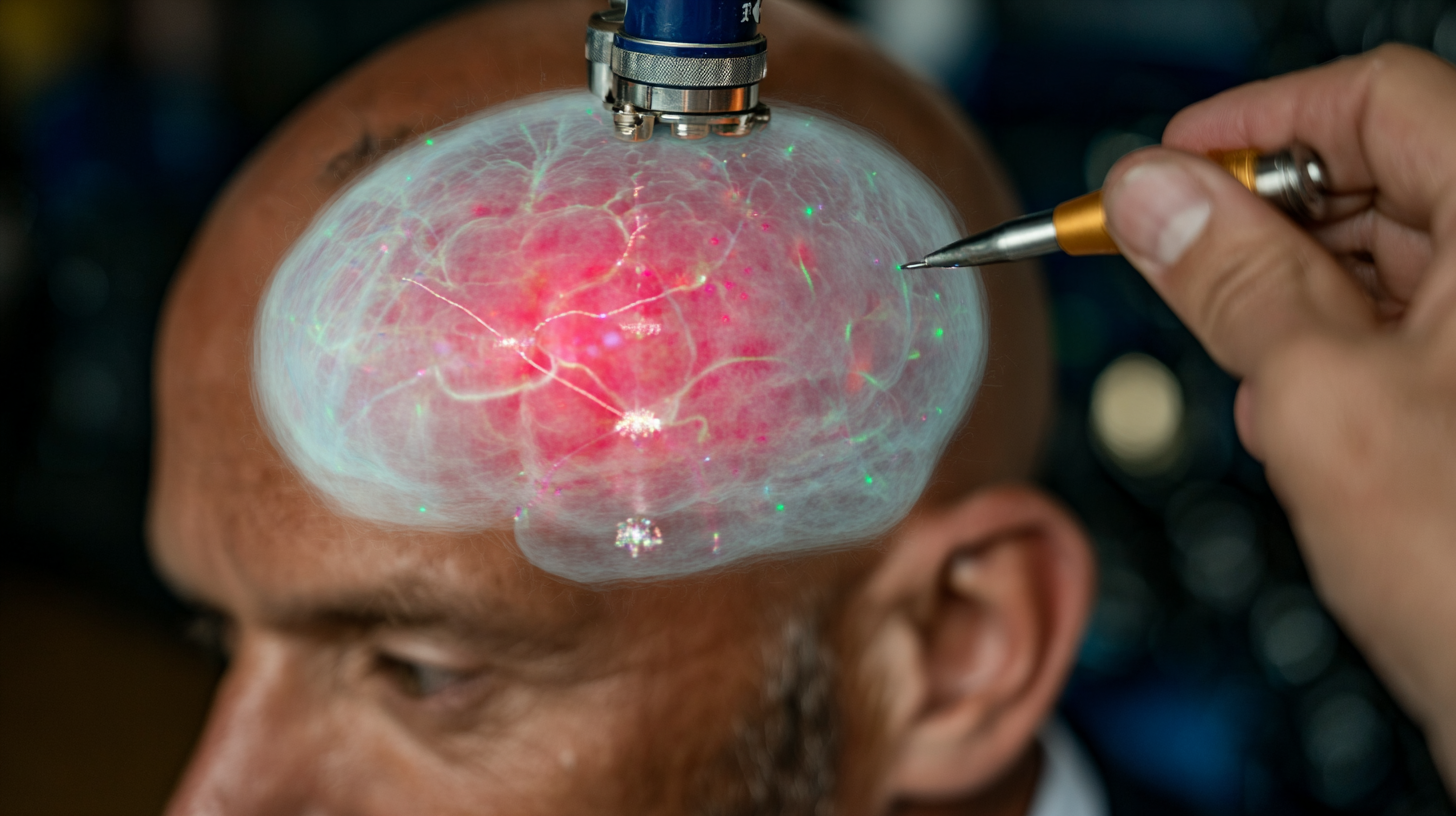

The problem has always been access. To study or influence these deep-brain circuits, medicine has relied on invasive surgery: drilling holes, implanting electrodes, or burning away malfunctioning tissue. These procedures can be life-changing, but they carry enormous risks. What if there were a way to reach the same circuits with no scalpel, no implant, and no irreversible damage?

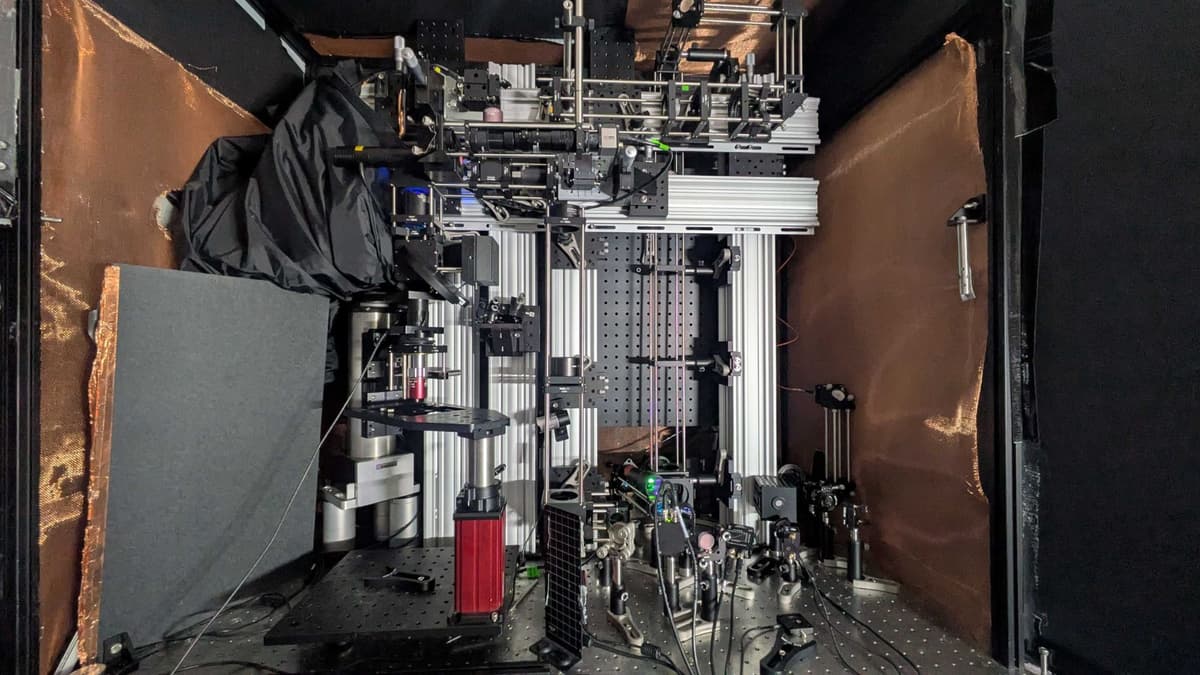

Continue reading… “The Ultrasound Helmet: A Non-Surgical Gateway Into the Deep Brain”